Narrative Objects

Mihai Pomarlan, UHB.

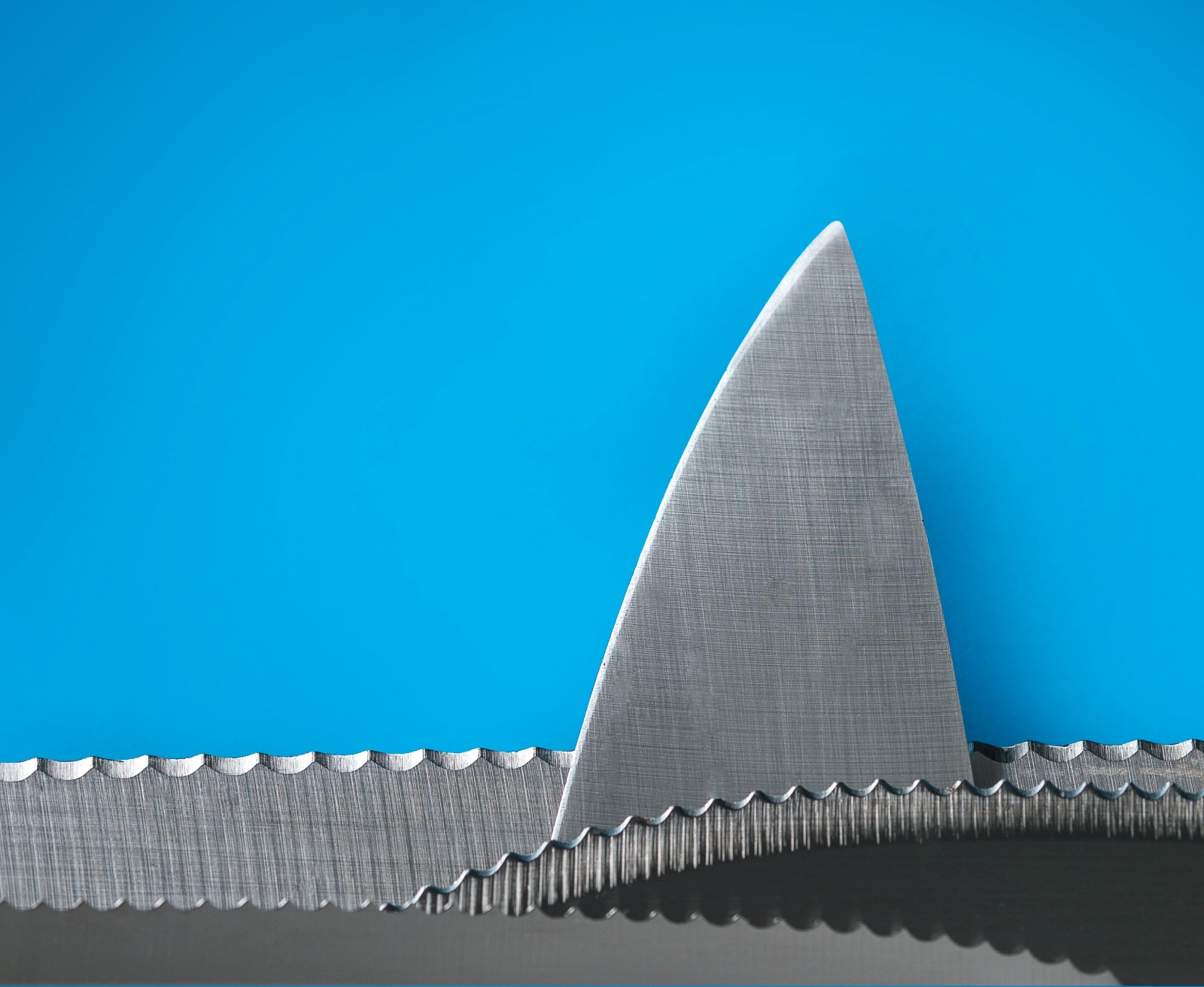

A recurring theme of AI research has turned out to be that what should be easy often is not. Consider this question – "what can I cut a stick of butter with?" You probably already thought of an answer, and if pressed, you could invent more creative ones. A string might do, or the edge of a glass perhaps if no knife is available – though you might protest if I suggested a jar's edge. It would be annoying to get the butter residue out of the threading.

You can answer such questions as the one above, and even employ some creativity in the answers, because, presumably, you have built a sophisticated model of the world and the things in it through your experience of interaction with them. You have an idea of what an object can do, and how it will change in various situations. Now that you have this model, it is second nature to reason with it. Could we implement something similar inside a robot to help us with housework? As always, let's start with moderate ambition. Could we implement a model of "normal" object use, so that our housework robot could at least give the (to us) obvious answer? We'll worry about whether a robot should be creative, and if so how, some other time.

But if all we want is a database of normal object uses, surely we have such a thing already. You could google "what can I cut a stick of butter with?" and get good answers. If Google can answer it, surely a robot can too. And yet ... "what should be easy often is not". It's up to you to select which of the answers are relevant to you, so finding a result with Google is often more of a collaboration than it first appears – and both partners should have some idea of the thing searched for. When I ran this experiment, the first article Google found was about "cutting in butter", a technique for mixing butter into the dry ingredients for baking. This is not quite what I meant, so I have to go down the list of search results to find one that tells me what I expected to find. Would a robot that did not already know how butter and cutting work be able to use these results? And what Google finds is in natural language, rather than a nice, well-structured, immediately usable message for a computer program.

So let's say Google is a bit too tricky for our robot, but surely there are large databases out there which cover this sort of everyday commonsense knowledge. Indeed, since commonsense reasoning is recognized as a challenge in AI, there are many efforts towards building commonsense knowledge resources – and this time, the data is represented in a machine-readable format. Querying and interpreting the results would be easy for a robot to do. The problem is that, were the robot to ask our cutting butter question, it would likely not get any answer. It turns out that existing commonsense knowledge resources are mostly useful for assisting search engines in organizing the websites they crawl into a more semantically rich web. For example, a commonsense knowledge resource might know of many famous people, and that they were human beings, and that human beings have birth dates. Or it would know of countries, and their capital cities. Such information is useful to construct a brief summary of knowledge about an entity, and will also assist in retrieving more documents about that entity – but now we are again in the area of a search engine providing results for a human being to select from. And the human being asked for the search. "What should be easy often is not" – in this case, because if it is easy for a human there is no point in firing up a search engine for an answer. As a result, what should be a commonsense knowledge resource ends up being a resource for trivia questions instead.

This does not make existing commonsense knowledge resources useless to our robot, but we need to add some more knowledge to them first. Some of this object usability knowledge was obtained by colleagues working on a related project, which involved the development of games through which human players could reveal their preferred object combinations when performing various tasks. We obtained further valuable information from linguistic resources that describe the roles objects can play in various kinds of events, and the restrictions on which objects can play which roles. Finally, we built our model on a cognitively motivated ontological foundation, which now allows the robot to reason with it and answer questions such as "what can this object be used for?", "can this object be used for a particular task?", and "what can this object be used with when performing a task?". Altogether, the existing knowledge resources we have used so far are: the CommonSense Knowledge Graph (CSKG), the linguistic resources VerbNet and WordNet, the data that our colleagues collected from the game with a purpose ToolFeud, the DOLCE Ultra Lite foundational ontology, and the SOcio-physical Model of Activity (SOMA) that we are developing in a related project. After some manual input and corrections to the data collected semi-automatically from CSKG, we obtained a new knowledge resource, SOMA_DFL.
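To make those three questions a little more concrete, here is a minimal Python sketch of what asking them could look like. It is not how SOMA_DFL is implemented or queried: the `Use` record, the handful of facts, and the function names are all invented for illustration, and a real ontology-backed resource would answer by reasoning over classes and roles rather than by scanning a flat list.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Use:
    """One hypothetical 'normal use' fact: a tool performs a task on a patient."""
    tool: str
    task: str
    patient: str


# Toy facts made up for this example; they are NOT taken from SOMA_DFL.
USES = [
    Use("knife", "cut", "butter"),
    Use("string", "cut", "butter"),
    Use("knife", "cut", "bread"),
    Use("spoon", "stir", "soup"),
]


def tasks_for(obj: str) -> list[str]:
    """'What can this object be used for?'"""
    return sorted({u.task for u in USES if u.tool == obj})


def can_be_used_for(obj: str, task: str) -> bool:
    """'Can this object be used for a particular task?'"""
    return any(u.tool == obj and u.task == task for u in USES)


def used_with(obj: str, task: str) -> list[str]:
    """'What can this object be used with when performing a task?'"""
    return sorted({u.patient for u in USES if u.tool == obj and u.task == task})


def tools_for(task: str, patient: str) -> list[str]:
    """The question from the introduction: 'what can I cut butter with?'"""
    return sorted({u.tool for u in USES if u.task == task and u.patient == patient})


if __name__ == "__main__":
    print(tasks_for("knife"))
    print(can_be_used_for("spoon", "cut"))
    print(used_with("knife", "cut"))
    print(tools_for("cut", "butter"))
```

Note that the toy table can only repeat what was typed into it. The point of combining the resources listed above is that the answers can be derived from more general knowledge instead of being enumerated by hand.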

There is always more work to be done, of course. Part of that work, ongoing at the moment, is to incorporate some causal knowledge into the SOMA_DFL knowledge base: what happens to an object if some action is performed upon it? How does the quality of an object affect the event it participates in? None of this refers to any advanced knowledge of science; what we want to have in SOMA_DFL is the kind of knowledge that is so useful, and so obvious – to human beings – that almost no one thinks it worth writing down. What should be easy often is not – a robot trying to do housework will need that knowledge, even as it lacks a natural intuition for it.

In any case, SOMA_DFL is available at the repository https://github.com/ease-crc/ease_lexical_resources. If your robots want to know how to choose tools for some job, give it a try. If they don't find an answer, let us know!

Credits

Intro image - Photo by Stoica Ionela via Unsplash
