From digital archives to online observatories, the peaks and chasms of social-media based research Pt.2

Carlo R. M. A. Santagiustina, Venice International University.

-Continues from Part 1

Having worked over the last decade on several EU research projects, like MUHAI, which aim to study social phenomena, such as inequality perception, through user-generated data from social media, I feel the need to put you on alert. Social media, and the information they collect, are certainly formidable tools for studying and comprehending social phenomena but, unfortunately, they are also formidable instruments for attempting to influence and manipulate online crowds. Therefore, they should be considered critical (dual-use) technologies in relation to freedom of expression, but also in relation to social justice, peacekeeping and the proper functioning of public institutions and markets. This is especially relevant in liberal democracies, which, from this point of view, are particularly vulnerable on both sides.

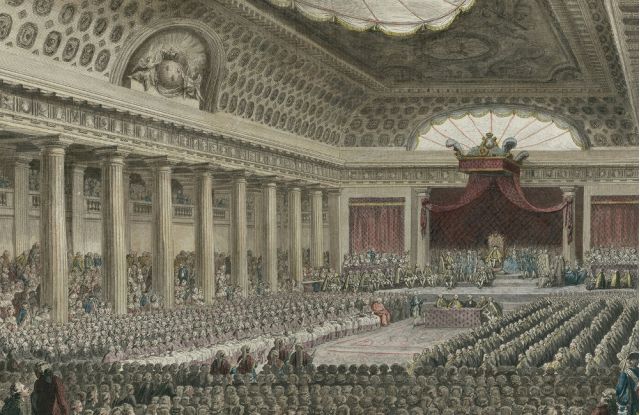

Social media platforms can be considered the contemporary, digital equivalent of the agoras of ancient Greece: just as in the ancient agoras some people discussed public affairs while others did private business, the same occurs nowadays on these platforms. Both usages are per se legitimate. But unlike in the past, citizens, representatives, institutions and civil society nowadays have little, if any, control over how these private online agoras are employed, and over the purposes for which the data generated by citizens is sold to (and used by) third parties for political, social or commercial exploitation. Social media companies are certainly required to comply with privacy and data protection regulation, which may partially protect users as single individuals and organizations. This type of protection, however, does not shield us as individuals belonging to, or identifying with, groups or communities based on lifestyles, values, beliefs, preferences or any other form of shared identity or common behavior. Once user data is aggregated and anonymized, it can be used for all sorts of purposes, from profiling, categorization and personalized advertising to political influence campaigns targeting groups of users that are similar in one or several aspects of their online behavior and identity. What is known about social media users at the aggregate level can hence be used to affect their views, preferences and behaviors. Since these platforms are populated by millions of citizens from dozens of countries, including totalitarian countries and façade democracies, many actors and organizations may have incentives to profit from their opacity, by using these platforms and their data to manipulate the opinions either of large and varied user populations or of carefully targeted influential audiences.

Virtual clashes roar, divergent views explore, data locked, voices sore.

For the aforementioned reasons, some social media platforms, like Twitter, have become virtual (but no less violent) battlegrounds, where armies of users and bots driven by divergent interests and views, like pro-Russians and pro-Ukrainians, confront each other in harsh debates to set the agenda of politicians and governance bodies, or to influence the opinions of the consumers and citizens that use these platforms. Other platforms, like Parler, stimulate by design the self-selection of aligned users and contents through homophily. Shared preferences, expectations and views that are sufficiently widespread within their communities shield community insiders and their partisan convictions from the risks and wonders of communicating with people who hold differing opinions.

Unfortunately, in most cases, the growth of these private platforms, and of the techniques for analyzing the rich and big data generated by their userbases, was followed by further restrictions on free access to that data for non-profit research purposes. The recent diffusion and commercial success of some general-purpose LLMs, which can also be trained using textual data from social media, has exacerbated this process of user-data privatization and monetization. This is especially problematic for social science research and other non-profit projects, like AquaGranda: a Digital Community Memory, which, given their nature, do not (and cannot) aim to generate a profit from the usage of social-media data, and therefore have great difficulty covering data access costs. This category is rather broad: besides researchers from academia and public research programs, it also includes researchers working for IGOs and NGOs, artists and activists, advocacy and volunteering groups and other non-profit organizations. These are also the actors whose activities and outcomes can possibly generate the greatest collective benefits and positive externalities for the public.

Short-circuiting non-profit research programs that study social, political, economic or socio-natural phenomena through the data generated by users on social media is easier than it may seem, and some platform managers and shareholders may want to do so simply to demand (data-)protection money from academia as well, in exchange for letting projects already underway continue.

Social media data policy changes are not simply due to privacy and data protection regulations and the related concerns of the platforms that collect user-generated data. Rather, they often stem from the desire to further privatize and monetize the value of data contributed by online communities in exchange for no compensation, that is: your data. The data that you, your friends and your family, among others, generated by posting content and commenting on each other's posts over the last two decades. Unfortunately, this process is not limited to Twitter, which recently withdrew free access for verified research projects by deleting academic projects and their credentials from the Twitter developer portal without any notice. Reddit's policies, for example, were also recently changed, which forced the free PushShift archive to close its doors to research projects as well. As strange as it may seem, Reddit moderators can still access the PushShift archive and its APIs, but researchers from academia cannot. These are only two highly visible instances in a rapidly evolving social media landscape, which seems intent on becoming more and more profit-oriented and opaque.

At this point, a couple of concrete questions may be running through your mind:

- What is lost when social media platforms change their free data access policies for researchers and other non-profit organizations?

- What could occur if, because of the increasing data-access costs and constraints, civil society and citizen-science projects are denied the opportunity to see and comprehend how communities organize, debate and deliberate through social media?

(End of Chapter 2 – Find the answers in the next and last chapter)
